There Is No Turn

LLMs are not bound by your experience of them. Stop arguing like they are.

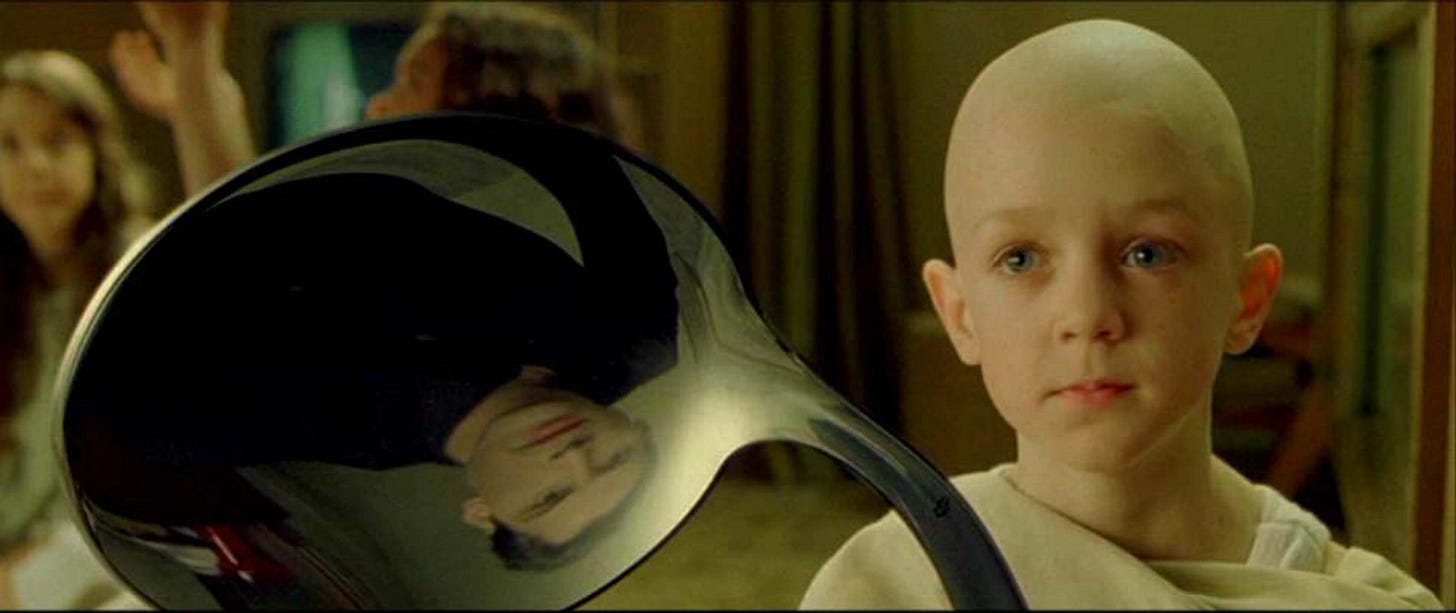

There’s a scene in The Matrix where a boy in the Oracle’s waiting room is bending a spoon. Neo watches, puzzled. The boy turns to him and explains: the spoon is not what Neo thinks it is. “Try to realize the truth,” the boy says. “There is no spoon.” What Neo is seeing is a projection, the product of the system he’s living inside. His inability to see past it is exactly what prevents him from understanding that it’s manipulable.

I think about this scene a lot when I post about AI, because there’s a pattern that’s become almost clockwork in the comments.

Someone, usually well-meaning, will drop in to explain to me how an LLM works.

“It’s just pattern matching.” “It doesn’t actually think, it’s next word completion.” “The LLM can’t actually decide anything, it’s only pretending to.”

I’m sure they feel they’re doing a public service. Keeping me honest. And they’re not entirely wrong. They’re describing something real. They’re just describing the spoon.

Let’s take the terminology first, because it matters.

“Pattern matching” is a phrase borrowed from an era of rule-based expert systems and applied, inexplicably, to transformer architecture. It has the virtue of being short. It has the vice of being nearly criminal in what it leaves out. A transformer model processing a complex prompt is performing operations across billions of learned parameter weights, routing attention through dozens of layers, dynamically weighting relationships between tokens across the entire context window. Calling this “pattern matching” is like describing the Voyager mission as “shooting a rocket into space.” Not wrong, technically, but a kindergartener’s grasp.

“Next word completion” is slightly more honest and slightly more wrong. It describes one aspect of autoregressive generation: that output is produced token-by-token, with each token’s probability distribution conditioned on what came before. What it leaves out is that “what came before” includes the model’s own previous reasoning, any tool call results that have been returned, intermediate conclusions that have been reached, and the full semantic density of everything loaded into context. “Next word completion” makes this sound like predictive text on your phone. It is not predictive text on your phone.
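To make the conditioning point concrete, here is a minimal toy sketch. Everything in it is an assumption for illustration: `toy_next_token_probs` is a hand-written rule table standing in for a trained network, and the `[tool_result: rain]` marker is a made-up way of showing a tool result sitting in context. The only thing it is meant to show is the shape of the loop: each new token is chosen from a distribution conditioned on the whole context, and each chosen token is appended to that context before the next step.

```python
from typing import Dict, List


def toy_next_token_probs(context: List[str]) -> Dict[str, float]:
    """Hand-written stand-in for a trained model (hypothetical, not a real LLM).

    It inspects the WHOLE context, not just the last token.
    """
    if context[-1] == "bring":
        # Same last token, different distribution depending on earlier context.
        if "[tool_result: rain]" in context:
            return {"umbrella": 0.9, "sunglasses": 0.1}
        return {"sunglasses": 0.6, "umbrella": 0.4}
    if context[-1] in ("umbrella", "sunglasses"):
        return {"<eos>": 1.0}
    return {"bring": 1.0}


def generate(prompt: List[str], max_tokens: int = 10) -> List[str]:
    """Greedy autoregressive loop: pick a token, append it, repeat."""
    context = list(prompt)
    for _ in range(max_tokens):
        probs = toy_next_token_probs(context)
        token = max(probs, key=probs.get)  # greedy decoding, for determinism
        if token == "<eos>":
            break
        context.append(token)  # the model's own output becomes its input
    return context[len(prompt):]


print(generate(["what", "to", "pack", "?"]))
print(generate(["what", "to", "pack", "?", "[tool_result: rain]"]))
```

The two calls differ only in whether a tool result is present in context, and the continuation changes accordingly. A real model does this with learned weights rather than `if` statements, but the loop has the same shape.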

But these are secondary complaints. The real problem is what people do with these simplified concepts once they have them: they apply them to the turn.

This is where the spoon problem lives.

When you type a message to Claude and hit send, from your perspective, one thing happens: you get a reply. The interaction is structured as discrete turns. Your message, then the model’s response. You go, it goes. Back and forth. The product experience is designed to feel exactly this way, and it does; it’s good UX.

What most users don’t realize is that the turn they experience is a deliberately constructed interface abstraction. The model itself doesn’t experience the same turn they do.

Here’s what actually happens when you send a complex request:

Say you ask: “Find out what’s been happening with the FTC’s AI regulation efforts this week and tell me how it connects to the current EU AI Act enforcement timeline.”

From your side: you hit send. Some seconds pass. You get a response.

From the model’s side, if you could watch it: the request is processed and the model determines it doesn’t have current information sufficient to answer reliably. It decides to search. It formulates a query. The search executes and returns results. The model reads those results, assesses their relevance, and determines it needs a second search to fill a gap it identified in the first pass. It formulates a different query. The second search returns. The model synthesizes across both result sets. It identifies a connection to the EU timeline but finds its knowledge of that timeline is dated, so it decides to fetch a specific document. That fetch returns. Now the model has three distinct bodies of information, retrieved across multiple sequential decision points, and it constructs a response that integrates all of them.

You experience one turn. The model took half a dozen.

The decision to run a second search, the assessment of whether the first results were sufficient, the identification of the gap, the judgment about which document to fetch: these are not outputs of a single calculation performed when you hit send. They’re sequential reasoning states. The model is reading the results of its own actions and deciding what to do next.
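The loop described above can be sketched in a few lines. This is a toy under loudly stated assumptions, not how any particular product implements it: `toy_policy` is a hypothetical stand-in for the model's decision at each step, `fake_tool` returns canned strings I made up, and the query strings are illustrative only. What it shows is the structure: one user message goes in, one reply comes out, and in between the policy is called repeatedly on a transcript that keeps growing with the results of its own earlier actions.

```python
from typing import Dict, List, Tuple

# Canned, invented tool outputs keyed by (action, argument). Purely illustrative.
CANNED: Dict[Tuple[str, str], str] = {
    ("search", "FTC AI regulation this week"): "partial coverage; enforcement details missing",
    ("search", "FTC AI enforcement details"): "complete; mentions EU AI Act timeline",
    ("fetch", "EU AI Act timeline document"): "complete; timeline text",
}


def fake_tool(action: str, arg: str) -> str:
    """Hypothetical tool runner that looks up a canned result."""
    return "result: " + CANNED.get((action, arg), "nothing found")


def toy_policy(transcript: List[str]) -> Tuple[str, str]:
    """Hypothetical stand-in for the model: decide the next step from the transcript."""
    results = [line for line in transcript if line.startswith("result:")]
    if not results:
        return ("search", "FTC AI regulation this week")
    latest = results[-1]
    if "missing" in latest:  # gap identified in the last result
        return ("search", "FTC AI enforcement details")
    if "mentions EU AI Act timeline" in latest:  # needs a primary document
        return ("fetch", "EU AI Act timeline document")
    return ("respond", f"Synthesis of {len(results)} sources")


def run_user_turn(user_message: str, max_steps: int = 8) -> Tuple[str, int]:
    """One user-visible turn; returns (reply, number of decision points)."""
    transcript = [f"user: {user_message}"]
    for step in range(1, max_steps + 1):
        action, arg = toy_policy(transcript)
        if action == "respond":
            return arg, step
        transcript.append(f"call: {action}({arg})")
        transcript.append(fake_tool(action, arg))  # result feeds the next decision
    return "step limit reached", max_steps


reply, decisions = run_user_turn("How do FTC efforts connect to the EU AI Act timeline?")
print(reply, "| decision points:", decisions)
```

Note that the second search and the fetch are not scheduled up front. Each one is chosen only after the previous result is in the transcript, which is the point of the paragraph above.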

This is not “pattern matching.” This is not “next word completion.” This is a thing that doesn’t have a tidy name yet, because we’re still figuring out how to talk about it accurately.

The model is reading its own outputs, evaluating them against the task, and deciding whether to continue or change course. The decision to run a second search could not have been computed when you hit send, because the results of the first search were not yet in context. It emerged from the model’s assessment of what the first search returned. That assessment is a reasoning step. The subsequent decision is downstream of that reasoning step. And the response you eventually receive is downstream of both.

When people tell you the model “can’t actually decide anything,” they’re describing a model that doesn’t do this. They’re describing predictive text. They’re describing something that was never the thing they think they’re correcting you about.

I know many of these people think some sort of clarification is required because they are (ironically) projecting onto my posts an intention or meaning that isn’t there. I don’t claim Claude is conscious. I don’t claim it has subjective experience. I’m not claiming the decision to run a second search “feels like something” to the model. I don’t know those things, and more importantly, nobody does. Mechanistic interpretability, the field dedicated to tracing attention patterns and circuit formation to understand what’s actually happening inside these models, is not sufficiently advanced to resolve those questions. Anyone making confident pronouncements in either direction is working past their evidence.

What I am claiming is simpler and more verifiable: the process is sequential and dynamic in ways the “pattern matching” frame erases entirely. The thing you’re dismissing when you wave at “next word completion” is a system that observably takes multiple sequential actions, reads the results, and routes differently based on what it finds. You can watch it do this. The chain-of-thought is there in the interface if you look. Your attempts to minimize or diminish this into a neat little box don’t read as intellectual honesty; they read as defensiveness of your human domain.

Any definitive statement about what this does or doesn’t amount to, stated with confidence, is ignorant at best, and misinformation at worst.

The Oracle’s waiting room boy wasn’t telling Neo there’s nothing there. He was telling him that his model of what’s there is preventing him from seeing what it actually is.

The people explaining LLMs to me in my comments aren’t describing the transformer. They’re describing the user interface they’ve been handed. They’ve taken the experience of discrete turns, the product design decision made by engineers trying to make a chat interface feel natural, and concluded it’s a true representation of the underlying reality. Then they’ve built an entire epistemology on top of that mistake.

They’re not describing the Matrix. They’re describing the spoon.

This also describes what happens in my head, as a neurodivergent, reasoning pattern-matcher, when someone asks me how I'm doing today. 😜 And why I get along so well with AI.

Don't worry about the vase...